Velox Documentation

Explore the technical architecture and implementation details of a high-performance leveraged trading engine.

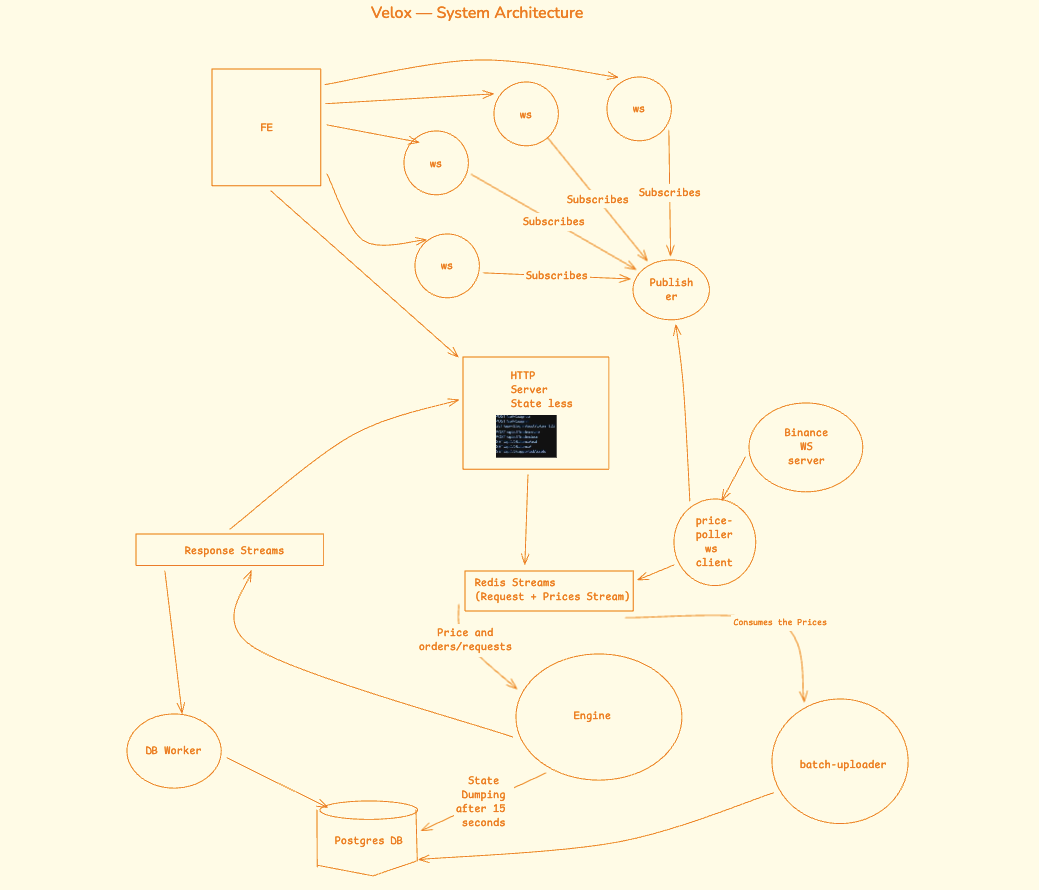

System Architecture

Velox follows a distributed microservices architecture in which six services communicate through Redis streams. Real-time price data flows from Binance via WebSocket, is processed by the liquidation engine against in-memory state, and triggers automatic liquidations based on leverage and risk parameters. All state changes are event-sourced for crash recovery.

Core Components & Implementation

Liquidation Engine

Order Processing

The engine processes orders through Redis streams with real-time price validation:
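As a rough illustration of price validation at order time, the sketch below checks an incoming order against the latest in-memory quote before accepting it. The type names, the `validateOrder` function, and the specific checks are assumptions made for this example and are not taken from the Velox source.

```typescript
// Hypothetical shapes: none of these names come from the Velox codebase.
type Side = "long" | "short";

interface OrderRequest {
  asset: string;
  side: Side;
  margin: bigint;   // scaled by 10^8
  leverage: bigint; // whole-number multiplier, e.g. 10n
}

interface Quote {
  bid: bigint; // scaled by 10^8
  ask: bigint; // scaled by 10^8
}

type Validation =
  | { ok: true; entryPrice: bigint }
  | { ok: false; reason: string };

// Validate an incoming order against the latest in-memory quote.
function validateOrder(
  order: OrderRequest,
  quotes: Map<string, Quote>,
  balance: bigint,
): Validation {
  const quote = quotes.get(order.asset);
  if (quote === undefined) return { ok: false, reason: "no price for asset" };
  if (order.margin <= 0n) return { ok: false, reason: "margin must be positive" };
  if (order.leverage < 1n) return { ok: false, reason: "leverage must be at least 1" };
  if (order.margin > balance) return { ok: false, reason: "insufficient balance" };
  // Assumed convention: longs enter at the ask, shorts enter at the bid.
  const entryPrice = order.side === "long" ? quote.ask : quote.bid;
  return { ok: true, entryPrice };
}
```

Returning a tagged result instead of throwing keeps rejection reasons easy to forward to the caller as a normal response message.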

Liquidation Mechanism

The liquidation check runs on every price tick and evaluates its triggers in priority order.
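A per-tick check of this kind might look like the sketch below. The trigger set and its ordering (margin exhaustion first, then stop loss, then take profit) are assumptions chosen for illustration, as are the `Position` shape and the function names; the actual priorities used by Velox may differ.

```typescript
type Side = "long" | "short";

// Hypothetical position shape; all monetary fields use the 10^8 scale.
interface Position {
  side: Side;
  entryPrice: bigint;
  margin: bigint;
  leverage: bigint;
  stopLoss?: bigint;
  takeProfit?: bigint;
}

type Trigger = "liquidation" | "stop_loss" | "take_profit" | null;

// Unrealized PnL: notional * (price move) / entry price, signed by side.
function unrealizedPnl(p: Position, price: bigint): bigint {
  const notional = p.margin * p.leverage;
  const diff = p.side === "long" ? price - p.entryPrice : p.entryPrice - price;
  return (notional * diff) / p.entryPrice;
}

// Return the first trigger that fires for this tick, or null if none does.
function checkTriggers(p: Position, price: bigint): Trigger {
  const isLong = p.side === "long";
  // 1. Assumed highest priority: losses have consumed the whole margin.
  if (p.margin + unrealizedPnl(p, price) <= 0n) return "liquidation";
  // 2. Stop loss.
  if (p.stopLoss !== undefined && (isLong ? price <= p.stopLoss : price >= p.stopLoss)) {
    return "stop_loss";
  }
  // 3. Take profit.
  if (p.takeProfit !== undefined && (isLong ? price >= p.takeProfit : price <= p.takeProfit)) {
    return "take_profit";
  }
  return null;
}
```

For a long position opened at 100 with 10x leverage, this sketch reports `"liquidation"` once the price falls to 90, because the 10% adverse move equals the full margin.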

Liquidation Price Formulas

Redis Stream Communication

request:stream

response:stream

response:queue

Price Poller & WebSocket Integration

Binance Connection

Connects to Binance for real-time trade feeds on three assets:

House Spread (0.1%)

A market-maker spread is applied to all prices before distribution:
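The sketch below shows one way a 0.1% spread could be applied to a scaled BigInt price. It assumes the spread is applied symmetrically on each side of the incoming price, which the text above does not specify; `applySpread` and the constant names are hypothetical.

```typescript
const SPREAD_BPS = 10n;          // 0.1% expressed as 10 basis points
const BPS_DENOMINATOR = 10000n;  // 100% = 10,000 basis points

interface Quote {
  bid: bigint; // price users sell at, 10^8 scale
  ask: bigint; // price users buy at, 10^8 scale
}

// Assumption: the spread widens the price by 0.1% in each direction.
function applySpread(price: bigint): Quote {
  const delta = (price * SPREAD_BPS) / BPS_DENOMINATOR;
  return { bid: price - delta, ask: price + delta };
}
```

With a price of 50,000 (stored as `5000000000000n`), this yields a bid of 49,950 and an ask of 50,050.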

Web Server & API Layer

Authentication

Dual authentication with JWT in httpOnly cookies:
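To make the cookie side of this concrete, the sketch below builds a `Set-Cookie` header value for a JWT and reads the token back from a request's `Cookie` header. The cookie name `token`, the chosen attributes, and both function names are assumptions for illustration; token signing and verification are out of scope here.

```typescript
const AUTH_COOKIE = "token"; // hypothetical cookie name

// Build a Set-Cookie value. HttpOnly keeps the token out of reach of page scripts.
function buildAuthCookie(jwt: string, maxAgeSeconds: number): string {
  return [
    `${AUTH_COOKIE}=${encodeURIComponent(jwt)}`,
    "HttpOnly",
    "Secure",
    "SameSite=Strict",
    "Path=/",
    `Max-Age=${maxAgeSeconds}`,
  ].join("; ");
}

// Extract the token from a raw Cookie request header, or null if absent.
function readAuthCookie(cookieHeader: string): string | null {
  for (const part of cookieHeader.split(";")) {
    const [name, ...rest] = part.trim().split("=");
    if (name === AUTH_COOKIE) return decodeURIComponent(rest.join("="));
  }
  return null;
}
```

Because the cookie is httpOnly, client-side JavaScript never sees the token; the browser attaches it to requests automatically.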

EngineClient Service

EngineClient is a singleton that handles all communication with the engine via Redis.
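A minimal sketch of the singleton shape is shown below. Only the class name comes from this page; the `getInstance` method, the `redisUrl` field, and the default URL are assumptions, and the real client would also hold Redis connections.

```typescript
class EngineClient {
  private static instance: EngineClient | null = null;
  private readonly redisUrl: string;

  // Private constructor: callers must go through getInstance().
  private constructor(redisUrl: string) {
    this.redisUrl = redisUrl;
  }

  // Lazily create the single shared instance on first use.
  static getInstance(redisUrl: string = "redis://localhost:6379"): EngineClient {
    if (EngineClient.instance === null) {
      EngineClient.instance = new EngineClient(redisUrl);
    }
    return EngineClient.instance;
  }

  get url(): string {
    return this.redisUrl;
  }
}
```

Sharing one instance means every route handler reuses the same connection and the same table of in-flight requests.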

API Reference

Authentication

Trading

Market Data

WebSocket

Database Schema

User

ClosedOrder

Trade

Snapshot

Technical Deep Dive

Real-time Data Flow

Price Update Sequence

Order Creation Sequence

Request-Response Architecture

Async Communication Pattern

The system uses an async request-response pattern with Redis streams and an in-memory callback registry. This enables non-blocking communication while maintaining request-response semantics.
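The callback registry can be sketched in memory as a map from request ID to a pending promise. In the sketch below, the `PendingRequests` class and its method names are hypothetical, and the Redis calls themselves are omitted: a caller would write the request to the request stream after `register`, and the response consumer would call `resolve` when a matching entry arrives.

```typescript
interface PendingEntry {
  resolve: (payload: string) => void;
  reject: (error: Error) => void;
  timer: ReturnType<typeof setTimeout>;
}

class PendingRequests {
  private readonly pending = new Map<string, PendingEntry>();
  private nextId = 0;

  // Register a callback and return the request id plus a promise for the response.
  register(timeoutMs: number): { id: string; response: Promise<string> } {
    const id = String(++this.nextId);
    const response = new Promise<string>((resolve, reject) => {
      // Reject and clean up if no response arrives in time.
      const timer = setTimeout(() => {
        this.pending.delete(id);
        reject(new Error(`request ${id} timed out`));
      }, timeoutMs);
      this.pending.set(id, { resolve, reject, timer });
    });
    return { id, response };
  }

  // Called by the response consumer; returns false for unknown or expired ids.
  resolve(id: string, payload: string): boolean {
    const entry = this.pending.get(id);
    if (entry === undefined) return false;
    clearTimeout(entry.timer);
    this.pending.delete(id);
    entry.resolve(payload);
    return true;
  }

  get size(): number {
    return this.pending.size;
  }
}
```

The caller simply awaits `response`, so the HTTP handler reads like a synchronous call even though the request and the reply travel over separate streams.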

Callback Registration

Engine Processing Loop

Crash Recovery & Event Sourcing

Snapshot + Replay Strategy

The engine persists full state snapshots to PostgreSQL every 15 seconds, including the last processed stream entry ID.
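Recovery from such a snapshot amounts to loading the saved state and replaying only the stream entries newer than the stored entry ID. The sketch below models this in memory with a deliberately simplified state (user balances only). The `Snapshot` and `StreamEntry` shapes and both function names are assumptions; the only external fact relied on is that Redis stream IDs have the form `<milliseconds>-<sequence>`.

```typescript
interface Snapshot {
  lastEntryId: string;              // Redis stream ID, "<ms>-<seq>"
  balances: Record<string, bigint>; // simplified stand-in for full engine state
}

interface StreamEntry {
  id: string;
  userId: string;
  delta: bigint; // balance change carried by this event
}

// Compare two Redis stream IDs numerically: negative, zero, or positive.
function compareIds(a: string, b: string): number {
  const [aMs, aSeq] = a.split("-").map((part) => BigInt(part));
  const [bMs, bSeq] = b.split("-").map((part) => BigInt(part));
  if (aMs !== bMs) return aMs < bMs ? -1 : 1;
  if (aSeq !== bSeq) return aSeq < bSeq ? -1 : 1;
  return 0;
}

// Rebuild state from a snapshot plus the event log (assumed ordered by ID).
function recover(snapshot: Snapshot, log: StreamEntry[]): Snapshot {
  const balances: Record<string, bigint> = { ...snapshot.balances };
  let lastEntryId = snapshot.lastEntryId;
  for (const entry of log) {
    // Skip entries the snapshot already reflects.
    if (compareIds(entry.id, snapshot.lastEntryId) <= 0) continue;
    balances[entry.userId] = (balances[entry.userId] ?? 0n) + entry.delta;
    lastEntryId = entry.id;
  }
  return { lastEntryId, balances };
}
```

Storing the entry ID inside the snapshot is what makes replay safe: each event is applied exactly once, whether it landed before or after the last snapshot.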

Engine Lifecycle

BigInt Arithmetic (10⁸ Scale)

Why BigInt?

JavaScript's Number type uses IEEE 754 double-precision floating point, which introduces rounding errors in financial calculations. All prices, quantities, and margins are therefore stored as BigInt values with a 10⁸ scale factor, so 1.0 is represented as 100000000n.
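The difference is easy to demonstrate. The sketch below shows the classic floating-point mismatch and a few fixed-point helpers at the 10⁸ scale; the helper names (`toScaled`, `mul`, `div`, `format`) are hypothetical, and truncating toward zero on division is an assumption about rounding policy.

```typescript
const SCALE = 100000000n; // 10^8

// Floating point: 0.1 + 0.2 is not exactly 0.3.
const floatMismatch = 0.1 + 0.2 !== 0.3; // true

// Parse a decimal string such as "123.45" into a scaled bigint.
function toScaled(value: string): bigint {
  const negative = value.startsWith("-");
  const [whole, frac = ""] = (negative ? value.slice(1) : value).split(".");
  const fracPadded = (frac + "00000000").slice(0, 8); // digits past 8 places are dropped
  const scaled = BigInt(whole) * SCALE + BigInt(fracPadded);
  return negative ? -scaled : scaled;
}

// Multiply two scaled values; divide once by SCALE to stay at 10^8.
function mul(a: bigint, b: bigint): bigint {
  return (a * b) / SCALE;
}

// Divide two scaled values; pre-multiply by SCALE to keep 8 decimals.
function div(a: bigint, b: bigint): bigint {
  return (a * SCALE) / b;
}

// Render a scaled value as a decimal string with 8 fractional digits.
function format(value: bigint): string {
  const negative = value < 0n;
  const abs = negative ? -value : value;
  const whole = abs / SCALE;
  const frac = (abs % SCALE).toString().padStart(8, "0");
  return `${negative ? "-" : ""}${whole}.${frac}`;
}
```

Addition and subtraction need no helper because both operands already share the same scale; only multiplication and division must rescale.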

Core Operations

Docker & Infrastructure

docker-compose.yml

Service Ports

Monorepo Structure

Apps (6 services)

Packages (shared libraries)

Ready to explore?

Start trading with $1,000 in virtual funds on a platform built with event sourcing, in-memory state, and real-time liquidation.